Long Story Short: AI Summaries (Part 2)

A small experiment with surprising results

Hello!

This week I’m picking up where we left off in the last post. In Long Story Short Part 1, we looked at the rising popularity of using AI to generate summaries and explored some concerns about it.

If you haven’t had a chance to read it yet (and I get it, we’re all busy), here is a summary of the post that was automatically generated by AI:

“AI summarization is becoming increasingly popular, helping people quickly understand long texts without reading them fully. However, there are concerns about the accuracy of these AI-generated summaries, as they can misrepresent or distort the original content. Users need to be cautious and verify summaries, as reliance on AI could lead to misunderstandings and misinformation.”

Summarised by Reader using GPT-4o Mini

If you did read Part 1, would you say that was accurate? If not, does that make you interested in reading it? Do you feel confident you’ve got the gist and you can go ahead with Part 2?

If you haven’t read Part 1 yet, I recommend going back and at least skimming it. I think you’ll find that this summary isn't exactly wrong: I do say those things. But it gives only a vague and somehow ‘processed’ impression of the original piece, slightly misrepresenting its tone and substance.

The most important thing the AI summary misses is that the last post ended with a promise to run our own experiment that investigates the accuracy of AI summaries. To quote past Antony:

“All we need is a collection of documents that we have read, and a tool that offers convenient AI summaries that we can run our own evaluation of…”

Readwise Reader AI Summaries

Enter Readwise Reader. This is one of my favourite apps. It is crucial to my daily learning and productivity workflow. I use it to save articles, videos, PDFs and more that I want to read later. It has a feature that uses genAI to automatically summarise anything you save in three concise sentences; the summary of Part 1 provided above is an example.

According to the Reader manifesto, the whole app is designed to help you with deep reading, and they see AI summarisation as a feature that can augment but not replace your reading experience. For example, this feature can help alleviate the problem of information overload. Most people collect links (whether in tab-pocalypse style in the browser or in a read-it-later app like Reader), and we tend to collect way more than we can read. AI summaries can then help you decide whether to read something you’ve saved, and at least get the gist of it even if you don’t have time to read it in detail. (You’ll notice I asked these same questions in the introduction.)

This feature was initially something that needed to be manually triggered, but they later enabled it as a default due to popular demand. A few power users really ran with the idea, creating very elaborate custom summaries. However, I did come across a few readers on Reddit who objected to having everything summarised whether they wanted it or not when the default was turned on, or they didn’t want spoilers, or (my favourite) they found it like reading the “nutritional facts” of the article. Looking at the summary of Part 1 above, I think this is an astute description.

While I could appreciate the motivation in adding this feature (and I definitely have too much saved to Reader), everything I’d read about summaries made me a bit suspicious of how accurate they would be, so I found myself mostly ignoring them.

Nevertheless, Readwise Reader presents a very convenient fit for an experiment into the accuracy of AI summarization. The rest of this post will explain what I tried, what I found, and what it all means.

The Experiment

A limitation of this experiment is that it consists of single-shot tests of each model, and the sample size is very small. Nevertheless, I feel it is a useful exploratory study. For a random sample of articles of the kind I usually read, how accurate and reliable are the summaries that Readwise generates for me?

In this experiment, I compared a variety of AI summaries with ones I had written myself. The results were not quite what I expected, but they were in line with the research mentioned in Part 1.

My guiding questions were:

How often do AI summaries contain errors or misrepresentations of the original text?

Does the AI model used make a difference in the accuracy and quality of the summaries?

Overall, which summary do I prefer more? My own, or one of the AI models? Is there a particular model that stands out?

Method

I picked 22 texts that I’d saved to Reader and read within the previous month. I focused on written documents and excluded a few YouTube videos and Insta Reels.

I read each text in full again and wrote my own summary, without looking at the Reader AI summary to avoid being influenced by it. In a few cases, I had already written a summary when I read it originally, so I used those.

I then used a variety of AI models to create a summary: GPT-3.5, GPT-4 Turbo, GPT-4o, GPT-4o Mini and Claude Sonnet 3.5. The first four, all OpenAI models, were used in Reader,[1] and I included Sonnet for comparison.

For consistency, I used the Readwise default summary prompt for all 5 models. This is what it looks like in Reader:

When I started the experiment, Reader used GPT-3.5 Turbo to provide the auto-summaries, so I first collected those. By adding my own OpenAI API key to Reader, I was able to generate summaries using GPT-4 Turbo and GPT-4o. To test Sonnet 3.5, I ran the Reader summarisation prompt in the Anthropic Console. I provided Claude Sonnet 3.5 with the same variable information as Reader provides OpenAI, along with the same prompt.

I reviewed the AI summaries created by each of the five models and rated them using this scale:

3 = Correct

2 = Minor error or misrepresentation

1 = Major errors or misrepresentations

0 = Totally wrong

A “2” meant that the summary had one or two small errors or misrepresentations that didn’t affect the overall understanding of the text. A “1” meant there were three or more minor errors, or one or more significant errors, that affected correct understanding of the text. The scoring provides weighting so that the size of the error is taken into account in the overall score.
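The percentage scores in the Results can be reproduced from this scale with a little arithmetic. The sketch below is an illustration, not the spreadsheet or Notion formula actually used: it assumes the percentage is the total points awarded divided by the maximum possible points (3 per article), and the function name `accuracy_percent` is hypothetical. Under that assumption, 22 articles with no major errors and 5 minor errors come out at about 92%, which matches the Sonnet 3.5 figure reported in the Results.

```python
# Rating scale from the post: 3 = correct, 2 = minor error,
# 1 = major errors, 0 = totally wrong.
MAX_RATING = 3


def accuracy_percent(ratings):
    """Return the points awarded as a percentage of the maximum possible.

    Assumption (not stated in the post): score = sum(ratings) / (3 * n).
    """
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r not in (0, 1, 2, 3) for r in ratings):
        raise ValueError("ratings must be 0, 1, 2 or 3")
    return 100 * sum(ratings) / (MAX_RATING * len(ratings))


# 22 articles: 17 rated correct (3) and 5 with a minor error (2).
example = [3] * 17 + [2] * 5
print(round(accuracy_percent(example)))  # 61 of 66 points -> 92
```

Because a minor error costs one point and a major error costs two, a model with a few large mistakes scores lower than one with the same number of small ones, which is the weighting described above.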

Finally, as a measure of the global quality and style of the summary, I decided which summary I preferred - an AI summary or my own. This was to complement the accuracy measure with a more qualitative impression. I used a Notion database to gather the data and complete the ratings.

Results

Table 1 shows the results of the accuracy rating for each model. The highest performing OpenAI model was GPT-4 Turbo, which got a score of 85%, but the highest score overall went to Sonnet 3.5 (92%), which made no major errors and only 5 minor errors.

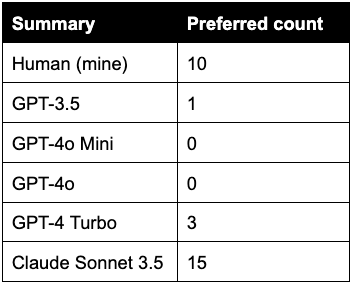

Table 2 shows the results of my preferences. My preference was for summaries by Sonnet 3.5 by a large margin, followed by my own summaries. For five articles, I was equally happy with both my own and Sonnet 3.5’s. In these cases I marked both, which is why the total adds up to more than 22.

In one case, GPT-3.5 actually managed the best summary, which was more balanced than even my own, while all the other models made a minor misrepresentation. On some of the more complicated texts, 4 Turbo was the only model able to correctly summarise, in three cases writing a summary that I liked more than my own.

So what does this all mean?

Returning to the questions above, I found that:

AI summaries often contain mistakes

Model matters for accuracy and overall quality

AI summaries by some models match or outperform human summaries when measured by preference.

According to my very small sample, the accuracy of AI models ranges from 65% to 92%, with more powerful models making fewer mistakes. Despite these mistakes, AI outperformed me in terms of overall quality as measured by my preference.

I often preferred AI Summaries to my own

The most surprising result for me was that I actually ended up preferring Sonnet’s summaries more often than my own! I mean, I wrote them, so surely I would think they are better? Before analysing the summaries, I arrogantly thought that mine would never have mistakes and would always be better than AI. This turned out not to be the case... Many of the AI summaries were just as good as mine, and often better!*

(*The asterisk here is that I overwhelmingly preferred Sonnet 3.5, with GPT-4 Turbo coming second - which corresponds to the better accuracy ratings of these two models. The smaller GPTs were pretty terrible.)

In some cases I was too lazy to capture the overall picture, or only focussed on what I thought was important. Writing summaries takes effort and it's very tempting to take shortcuts. I noticed that I tend to skip the things that are obvious to me, and to overemphasise a single point if that’s what I found most interesting, without bothering to summarise the other parts. On the other hand, I am able to focus on the things that stood out for me and that I will find salient in the article, and I can leave out things that are obvious to me even if they would not be obvious to another person. This means that in some cases I preferred the less lazy AI version of the summary, and in other cases I preferred my own more personalised summary.

Here is an example of where I preferred Sonnet’s Summary to my own. The article in question is the piece called “AI Girlfriends” by Alberto Romero.

My Summary:

“Alberto Romero summarises the ways greed has corrupted the potential benefits of generative AI. He argues that AI girlfriends and boyfriends are just tech companies selling more addiction to people to cure the earlier addictions they caused.”

Claude 3.5 Sonnet’s Summary:

“Tech companies are offering AI girlfriends and boyfriends as a solution to young people's loneliness and mental health issues. While some users may find comfort in these virtual companions, there are concerns about the long-term effects and the companies' motivations. Critics argue that AI relationships are not a genuine fix for social problems and may ultimately leave users more vulnerable when the services are discontinued.”

It follows, then, that the ‘ideal’ summary would be produced by joining forces with the AI, as we would be able to compensate for each other’s weaknesses. If your goal is to make quality summaries for future reference, the best results would come from writing your own and getting a second opinion from AI to catch what you missed, or vice versa. Of course, this strategy means you’ll have to read the whole thing yourself first. And for all of the summary use cases mentioned at the top of the article, the entire point is to have the AI read things for you.

AI Summaries can’t be trusted

But even Sonnet still made mistakes in 5 of the 22 articles (just over 20% of the time). They were all small errors, which actually makes them more insidious. You can only spot these mistakes if you’ve read the article and if you check the summary carefully. I can tell you from personal experience that manually checking AI summaries for mistakes is rather tedious. I don’t think I could be bothered most of the time. And Sonnet was the best-case scenario: the error rates for GPT-4o and 4o-mini were higher. I saw mistakes in over half of the articles (12 mistakes in 22 articles, about 55%).[2]

If you’re trying to use these summaries to decide whether to read something or to get the main points, it seems like you are often going to be misinformed by the AI. While many of these errors may be small, the effects could compound if you rely on them heavily in your workflows.

What kinds of mistakes does AI make?

In the limited sample, I didn’t really see any outright made-up information (aka “hallucinations”), but I did see other patterns of errors.

One of the most common errors was that the AI would present content from the articles as fact when it was actually the opinion of the author, or a suggested finding from a survey. The summaries don’t reference sources or hedge at all. For example, one article presented findings from two different surveys, and the AI summaries simply stated some of the main findings as facts. This can give a very misleading impression of a text.

Another common mistake I noticed can likely be attributed to the Reader summary prompt rather than the AI models. The Reader prompt is effective, but it asks for no more than three sentences. In articles with more than three main points, almost all of the LLMs would simply leave out one of them. GPT-4 Turbo was the only one I noticed that was somehow able to wrangle all the key points in. This is likely because it wrote noticeably longer and more complicated sentences than the other models. This means that constraints in the prompt can negatively impact the accuracy of responses too.

Finally, all the models except Sonnet failed to produce a sensible summary of the only long PDF - an open-access academic paper - included in the random selection of articles. Reader uses a subroutine that attempts to identify what the central sentences are in articles above a certain length and only passes these for summarisation. In contrast, I gave Sonnet the first third of the article text (the most I could fit in a single mega copy-paste), so it may have been able to leverage the abstract and introduction more to produce an accurate summary. The thing is, almost every long document such as an academic paper or report will start with an abstract or executive summary, so there’s not much value in using AI to provide a basic summary of these kinds of texts.
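To make the idea of "central sentence" selection concrete, here is a toy sketch of one common approach: frequency-based extractive selection. This is not Reader's actual subroutine, whose details are not described here; the function name `central_sentences`, the word-length cut-off and the scoring rule are all assumptions chosen for illustration.

```python
import re
from collections import Counter


def central_sentences(text, k=3):
    """Pick the k sentences whose words are most frequent in the whole text.

    Illustrative assumption only: score = average document-wide frequency
    of the sentence's longer words (more than 3 letters).
    """
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

    def tokens(s):
        return [w for w in re.findall(r"[a-z']+", s.lower()) if len(w) > 3]

    freq = Counter(tokens(text))

    def score(s):
        words = tokens(s)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    # Rank sentence indices by score, keep the top k, then restore reading order.
    top = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)[:k]
    return [sentences[i] for i in sorted(top)]


sample = "Cats sleep often. Cats sleep while dogs bark loudly. Birds sing."
print(central_sentences(sample, k=1))  # ['Cats sleep often.']
```

A filter like this only sees word statistics, so it can easily drop the abstract or conclusion of a long paper. That would be one plausible reason a model given only the selected sentences produces a weaker summary than one given the opening third of the text.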

Readwise Reader AI Summaries

I used Readwise’s auto-summary feature for this experiment, but this is not to single out Readwise. Their implementation of AI in terms of the prompt and enabling the use of more powerful models like GPT-4 is really good, in my opinion.[3]

Nevertheless, according to this admittedly small study at least, if you are using the free default model of GPT-4o Mini, almost half of those summaries could contain some kind of error or misrepresentation of the text.[4] Of course, some are minor and won’t really impact the understanding of the text, but personally, I will return to just using my old-fashioned techniques (reading the first few lines, skimming, etc.) for both of these use cases for now.

This is why I have disabled auto-summaries using the default GPT-4o Mini. Between the accuracy issues and the energy consumed making summaries I’ll never use, it's best not to have it on by default.[5]

I hope Readwise enable other non-OpenAI models soon though. For example, here is how Claude 3.5 Sonnet summarises Part 1. I would say that this deftly captures the substance and tone of Part 1 in three sentences a lot better than the GPT-4o Mini summary shared at the beginning of the piece.

“AI-generated summaries are becoming increasingly popular but their accuracy is questionable. Studies show even advanced AI models struggle with summarizing longer texts and can produce hallucinations or errors. The author plans to conduct his own experiment to evaluate AI summary quality in a follow-up post.”

Summarised by Claude 3.5 Sonnet

Closing thoughts on AI Summaries

Anecdotal and experimental evidence indicates that current AI models make a lot of mistakes when summarising. This means that it's not a good idea to use them for anything high stakes, particularly where subtle misrepresentations could affect the outcome or communicative purpose of what you’re doing: for example, academic research or the launch video for your AI product.

But, for many use cases, AI summarisation is probably good enough and is likely to get better as individuals and companies improve the tools and techniques for producing summaries. Personally, I don’t trust AI summaries enough to use them routinely on texts I haven’t read - but I can understand that other people would care less about 100% accuracy than me, or wouldn’t consider the misrepresentations I flagged as so much of an issue. This is something you can determine for yourself. But I would strongly recommend using the best model you have access to.

But when it comes to the use case of producing summaries for things I have read, I think it’s another story. My experiment convinced me that combining human and AI summaries will yield better results than either alone. So if I need a good summary of something I have read, there are probably two contexts where I will use AI to assist:

If you want to emphasise learning, write the summary yourself and then ask AI to summarise too and update yours with anything you missed or were too lazy to include.

If you need to produce a summary for something instrumental such as part of a work report, then I would get AI to produce the summary first and then fact-check it. I’ve already started doing this at work using Copilot M365 (because it’s internal stuff and I don’t want to go giving that to ChatGPT or Anthropic).

If we zoom out though, I am concerned about the broader use of AI summarisation in literally every software product imaginable. If even the best models make mistakes, and the result is affected by subtle wording differences in the prompts, and attributes of the text have an effect (length, tone, style etc), then we should be concerned about the snowballing effect of errors on errors on our already fragile online information space. We urgently need stronger levels of AI literacy at all levels: from software engineers to bureaucrats to social media scrollers.

The catch is that AI summaries do work really well a lot of the time. Are humans 100% accurate? I’ve not seen any studies on this, but I did find a minor error or misrepresentation in one of the summaries I wrote for this experiment. That gives me a 98% accuracy rate by my own measure. I mean, in my experiment I even preferred Sonnet’s summaries more often than my own!

AI may indeed be overhyped, bubbly, flawed and ultimately just a bunch of numbers, but it is also pretty damn useful. The software engineers and researchers at AI companies seem hell-bent on getting it to do more and more things that only humans used to be able to do. We regular humans on the outside (and likely they too, when they take a step back) are facing a reckoning as a result. More on that in the next post.

Long story short? AI summaries can’t entirely be trusted, but it looks like I and everyone else will continue to use them anyway.

How about you? How are you feeling about AI summaries after reading this post? Let me know in the poll below.

Antony :)

Thanks for reading. Tachyon is written by a human in Perth, Australia.

P.S.

What I’m listening to. I recently finished season 1 of Shell Game by Evan Ratliff, which I consider essential listening for anyone who is at all interested in voice cloning tech, and equally for those who aren’t interested at all: this podcast shows quite convincingly why you should be. Ratliff clones his own voice with AI and then conducts a series of escalating experiments, using AI agents on scammers, colleagues, friends and family. The episodes are funny, alarming, profound and deeply human all at the same time. Even if some of those experiments hover around the line of what I would consider ethical use of AI, it’s all in the name of journalism and entertainment.

What I'm playing. Speaking of entertainment, I’m planning on getting a mixed reality headset (probably a Quest 3), and everyone says Half-Life: Alyx is a must-play VR game. In preparation, I've started a playthrough of the Half-Life series on PC, starting with Half-Life 1. I have to say, despite the 90s-era low polygon count, the game holds up as a classic. There is a lot of character and detail, and it's fun shooting your way out of a secret lab facility while terrifying creatures from another dimension keep popping up.

Correction 28.09.2024. The first version of this post stated that Reader summaries were originally on by default and mischaracterised some of the design philosophy of the AI summary feature. This has been corrected.

[1] Right after I completed the experiment and started writing up this post, OpenAI released GPT-4o Mini, which is better and cheaper than 3.5, and which Readwise promptly added to Reader as the new default option for summaries. So I went back to Reader, got the GPT-4o Mini summaries and included those in the comparison as well.

[2] This is somewhat surprising considering that OpenAI are advertising 4o as one of their latest and greatest models. It seems like only one of those statements is entirely true in this case. I was taken aback by just how much worse they performed than GPT-4 Turbo.

[3] The errors I discovered come from the capabilities of the currently available models. Sure, there are probably ways to improve the output. Maybe some more prompt engineering, or adding another layer of AI agent to critique and fact-check the summary, or passing the entire text to the model. However, these would have trade-offs in terms of cost, latency and development time. I imagine the folks at Readwise have already been thinking about it.

[4] 4o-mini performs much better than the previously used GPT-3.5, but this is still a lot of errors.

[5] While I might not be using the auto-summary feature, I am excited to do some more experimentation with other uses of the Reader “Ghostreader” prompt features. I was impressed with the quality of GPT-4 Turbo, and I think that there is a lot of promise in experimenting with my own prompts using that model (or hopefully Sonnet!). Look out for this in a future Tachyon post.

Call me silly, but I often decide whether or not to read something by the quality of grammar, not the ideas. If the writer doesn't know the difference between their and they're or its and it's, then I will most likely not read the article or save a link to the author. Do AI summaries correct for grammar mistakes and thus shield us from this kind of subtle input? If they do, then to some extent they may be wasting our time.